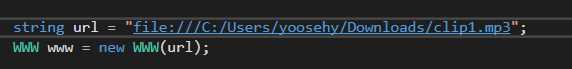

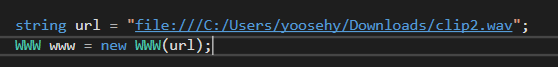

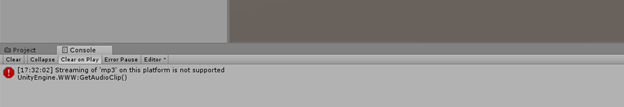

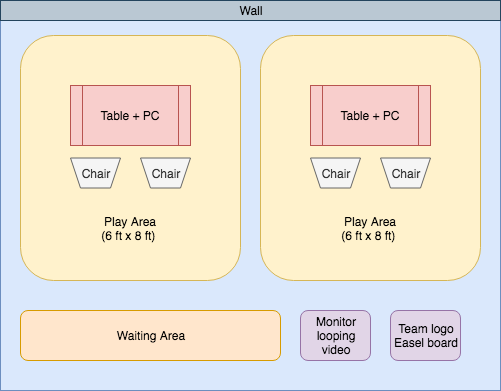

Summary

After an enjoyable Thanksgiving break, we’re back at work on our app and working to finalize it, since final demos are only two weeks away. We improved our app’s drawing experience in two ways. First, we switched from a square brush stroke to a circular one, since a circular brush feels much more natural to draw with. Second, we fixed the issue where moving the brush very quickly produced a sparse, dotted path instead of a connected one. We also began investigating how to send audio files from the PC and play them in our app. Another thing we worked on was a controller-mounted menu system. Menus will let users change drawing settings (e.g., stroke size and color) and create new whiteboards. We also improved window manipulation: windows now auto-attach to planar and near-planar surfaces (both physical and virtual) when dragged onto one. Finally, we prepared for the final demo event by creating a demo plan.

Progress

The first drawing issue we worked on this week was switching from a square brush stroke to a circular one. We used squares to begin with because the Unity Texture2D class we’re using only lets you either set a single pixel at a time or set all the pixels in an axis-aligned rectangular region at once. The former performs poorly if you’re setting a lot of pixels, so we used the latter. But the latter is awkward for circles, since you don’t actually want to set every pixel in the rectangle, just the ones inside the circle. Setting the other pixels to a completely transparent color doesn’t help either: the new value doesn’t blend with the existing one, it simply replaces it with a fully transparent pixel.
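Here is a minimal Python sketch of stamping a circular brush through a single rectangular block write, assuming the texture is modeled as a list of rows of RGBA tuples. The idea is to start from the rectangle’s current colors and overwrite only the pixels inside the circle, so writing the whole block back leaves everything outside the circle unchanged. The name `stamp_circle` is hypothetical, and the block read and write only imitate the rectangle overloads of Unity’s Texture2D.GetPixels and SetPixels rather than calling them; reading the whole block in one pass is a choice made for this sketch.

```python
from typing import List, Tuple

Color = Tuple[float, float, float, float]  # RGBA, each channel in [0, 1]


def stamp_circle(texture: List[List[Color]], cx: int, cy: int,
                 radius: int, color: Color) -> None:
    """Stamp a filled circle using one rectangular block read and write.

    texture[y][x] stands in for a Texture2D pixel (hypothetical model).
    """
    height, width = len(texture), len(texture[0])

    # Axis-aligned bounding rectangle of the circle, clipped to the texture.
    x0, x1 = max(cx - radius, 0), min(cx + radius, width - 1)
    y0, y1 = max(cy - radius, 0), min(cy + radius, height - 1)
    if x0 > x1 or y0 > y1:
        return  # the circle lies entirely off the texture

    # Read the block's current colors so pixels outside the circle keep them.
    block = [[texture[y][x] for x in range(x0, x1 + 1)]
             for y in range(y0, y1 + 1)]

    # Overwrite only the pixels whose centers fall inside the circle.
    for y in range(y0, y1 + 1):
        for x in range(x0, x1 + 1):
            if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2:
                block[y - y0][x - x0] = color

    # Write the whole block back, row by row.
    for y in range(y0, y1 + 1):
        texture[y][x0:x1 + 1] = block[y - y0]
```

Because the pixels outside the circle are written back with the colors they already had, the rectangular write no longer erases them to transparent.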
We realized this week, though, that Texture2D has a method for reading a pixel’s current value. So for any pixel that’s inside the bounding axis-aligned rectangle but outside the circle, we now read its current color and write that same color back.

The second drawing issue we worked on this week was filling the gaps between the discrete points drawn, using linear interpolation, since drawing very quickly was making the path look sparse and dotted. We started with a simple approach: using a linear equation of the form “y = mx + b” to fill in the missing pixels (i.e., for each x value between the two endpoints, draw the point (x, mx + b), where m and b are determined by the endpoints). However, this approach didn’t account for steep lines (i.e., lines whose slope has an absolute value greater than 1). For example, if the user draws a perfectly vertical line, the slope of the line connecting the endpoints is undefined; we hadn’t considered that initially, so we were dividing by zero. Furthermore, even when the line isn’t perfectly vertical, a sufficiently steep line causes problems: one point per x value leaves gaps in the y direction, so some x values need more than one point to avoid sparseness. We solved this by working out how many points above and below (x, mx + b) each x value needs so that every y value between the endpoints is included. The resulting algorithm handles all of these edge cases and works with any stroke size (i.e., not just a stroke size of 1px).

We also began investigating how to play audio files in augmented reality. We built a music player prefab that contains a play button, a volume control, and a seek bar that displays the current time in the song. To play the audio, we used Unity’s AudioSource component together with an AudioClip.
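To make the stroke gap-filling fix described above more concrete, here is a minimal Python sketch of the “y = mx + b” approach with extra points per column for steep lines. Assumptions: pixels are integer (x, y) pairs, `connect_points` and `round_half_up` are hypothetical names, and each column covers the y values the line passes through within half a pixel of that x. That half-pixel window is one way to decide how many points go above and below (x, mx + b); it is not necessarily the exact rule used in our app. The sketch returns pixel centers only; for larger stroke sizes you would stamp the brush at each returned point.

```python
import math
from typing import List, Tuple

Point = Tuple[int, int]


def round_half_up(v: float) -> int:
    # Python's round() uses banker's rounding; floor(v + 0.5) is consistent.
    return math.floor(v + 0.5)


def connect_points(a: Point, b: Point) -> List[Point]:
    """Return pixels joining a and b so every y between them is covered."""
    (xa, ya), (xb, yb) = sorted([a, b])  # order endpoints so xa <= xb
    y_min, y_max = min(ya, yb), max(ya, yb)

    if xa == xb:
        # Vertical line: the slope is undefined, so avoid dividing by zero.
        return [(xa, y) for y in range(y_min, y_max + 1)]

    m = (yb - ya) / (xb - xa)
    intercept = ya - m * xa  # the "b" in y = mx + b

    points: List[Point] = []
    for x in range(xa, xb + 1):
        # y values the line passes through within half a pixel of this x.
        y_left = m * (x - 0.5) + intercept
        y_right = m * (x + 0.5) + intercept
        # Clip to the endpoints so the run doesn't overshoot them.
        lo = max(round_half_up(min(y_left, y_right)), y_min)
        hi = min(round_half_up(max(y_left, y_right)), y_max)
        # Points above and below (x, mx + b); the run length grows with |m|.
        points.extend((x, y) for y in range(lo, hi + 1))
    return points
```

Neighboring columns share the boundary value at x + 0.5, which rounds to the same integer in both, so no y value between the endpoints is skipped. Vertical lines are handled before the slope is computed, and steep lines automatically get a vertical run of points in each column.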
A major problem we faced in transferring audio files from the desktop to the Magic Leap headset was the format in which the server delivers them. Our current server architecture gives us access to each transferred file as a byte array, and it isn’t simple to instantiate an AudioClip object from just a byte array. So we took an alternative approach: saving the byte array back to a file on the Magic Leap’s disk. Once we can successfully save files to the headset’s disk, we can use the WWW class to load the file and convert it to an AudioClip easily. At first, we had trouble figuring out the current working directory and the file hierarchy of the Magic Leap’s disk at runtime. The next problem was that WWW didn’t support loading mp3 audio files from local disk. We’re still investigating the cause of this error and looking for other ways to load audio files as an AudioClip.

Something else we’ve been working on since the last blog post is menus for our app. One reason we need menus is that there’s currently no way to create new whiteboards. The app starts up with one already in the scene, but we’d rather not even do that, since its location depends on the coordinate system, which has a different origin and orientation every time the headset is turned on. Instead, we’d like a menu that lets the user introduce a new whiteboard into their workspace. Another reason we need menus is the drawing options. Drawing currently supports different stroke sizes and colors, and you can switch between a brush tool and an eraser tool, but there’s no way to access this functionality yet, so we need to expose it through a menu. We decided to create controller-mounted menus. They’ll appear in the same plane as the controller’s touchpad, probably several inches in front of the controller. So far, we’ve only worked on the drawing menu.
It currently looks like this:

As you can see, there are sections for controlling the brush color, the active drawing tool, and the tool’s stroke size. We currently have 10 brush color options, and stroke sizes can range from 1px to 20px. Our plan is to use left and right swipes on the controller’s touchpad to choose the current section (marked with a blue bottom edge), and up and down swipes to interact with that section. A swipe up moves forward and a swipe down moves backward. Forward means left-to-right, then top-to-bottom, in the brush color section; left-to-right in the active drawing tool section; and increasing stroke size in the stroke size section. We plan to use the same menu system for introducing new whiteboards (and clocks) into the workspace, but we’ll save the details for next week, since we still need to design that menu.

Regarding window manipulation, this week we worked on getting windows to attach to surfaces. First, we had to decide what should trigger auto-attachment. As we discussed last time, it should involve some kind of collision, so we decided that auto-attachment should happen as soon as a window collides with a surface. The window should then be repositioned and reoriented so that it lies flat on the surface. Working out how to lay the window flat required some math: we had to calculate the minimal translation and rotation that would bring the window flat against the surface. Once we figured out the math, the rest of the implementation was relatively straightforward, since we had already implemented collision detection last week. But after we finished our first attempt at auto-attachment and began testing it, we soon found a significant drawback: auto-attachment can happen only after the user lets go of the window (i.e., releases the controller’s trigger).
This is because when a window is being dragged, its position and orientation are controlled by a script that places the window based on the position and orientation of the controller. Therefore, the auto-attachment script can control the window’s position and orientation only after the user releases the trigger. Unfortunately, this makes for an unintuitive user experience in which the window lies flat only after the user lets go of it; once users have let go of a window, they probably don’t expect it to move. To solve this issue, we needed to integrate auto-attachment with dragging so a window can auto-attach while the user drags it. To do this, we added logic to the dragging script that keeps it from controlling the window’s position and orientation in the usual way (based solely on the controller) while the window is colliding with a surface. Instead, during a collision, the window’s position and orientation should be determined by both the controller and the surface it collided with. The window should reorient to be parallel to the surface and reposition to lie on it, but controller motions should still be able to move the window around on the surface and rotate it within the surface’s plane. We modified our implementation to account for these details, and the result works well and feels pretty natural to use.

Finally, we created our initial demo plan this week. We will have two demo stations in a 16-foot by 12-foot area.

Here is a sketch of our floor plan layout:

If you want to view the rest of our plan, you can download it here:

The demo will be on Dec 13th from 4:00 pm to 6:30 pm in the atrium of the Paul G. Allen Center for Computer Science & Engineering.

Plan for next week

Next week is our last week to work on this project before final demos week, so we’ll continue finalizing our project and preparing for demo day.
In part, that means preparing for the event itself (creating a demo video, creating a team banner, etc.), but we’ll also need to work on the app. One thing we need to do is find and fix bugs. For instance, we haven’t yet thought about what happens if a corrupted file is transferred to the headset (e.g., something the user says is a PNG but isn’t). We also noticed that pressing buttons other than the trigger while drawing causes lines to be drawn, which we didn’t intend, so we need to make sure our event handlers respond only to their intended events. We also need to merge in the menu system, which was developed in a separate project from our main app, and to design and add the menus for creating new whiteboards (so far, we’ve only given attention to the drawing menus). The current file transfer experience is also a little odd: files just show up somewhere in space, and if you transfer several at once, they all appear in the same spot. We might change this so that only the first file appears in space immediately, with some kind of menu option for bringing in the rest one at a time. Regarding new features, we have several potential use cases for haptic feedback in mind, so we’d like to implement them using the Magic Leap Controller’s haptic feedback capability. We’d also like to allow resizing objects using left and right taps on the controller’s touchpad. For file transfer, we’d like to add support for 3D objects. We’d also like to make the window buttons (e.g., the close button) fade away when you aren’t pointing the controller at a window. Beyond that, our final stretch goal is to let users control their desktop pointer with the Magic Leap Controller so they don’t have to switch back and forth between it and their mouse or trackpad.
Assuming network delays are small enough, the biggest issue should just be figuring out where in space the user’s screen is, and doing it accurately enough that the pointer goes where the user thinks it should.
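Once the screen’s location is known, the pointer-mapping step could look roughly like the minimal Python sketch below. It assumes the hard part is already solved: the screen is modeled as a flat rectangle in world space with a known top-left corner and perpendicular edge vectors. The function `ray_to_screen_pixel`, its parameters, and the tuple-based vector helpers are hypothetical stand-ins for Unity’s Vector3 math. The sketch intersects the controller’s ray with the screen plane and converts the hit point into pixel coordinates, returning None when the ray misses the screen.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_to_screen_pixel(origin: Vec3, direction: Vec3,
                        top_left: Vec3, right_edge: Vec3, down_edge: Vec3,
                        width_px: int, height_px: int) -> Optional[Tuple[int, int]]:
    """Map a controller ray to desktop pixel coordinates (hypothetical model).

    right_edge spans the screen's full width and down_edge its full height,
    both starting at top_left; the two edges are assumed perpendicular.
    """
    normal = cross(right_edge, down_edge)
    denom = dot(direction, normal)
    if abs(denom) < 1e-9:
        return None  # ray is parallel to the screen plane

    # Ray parameter t where origin + t * direction meets the screen plane.
    t = dot(sub(top_left, origin), normal) / denom
    if t < 0:
        return None  # screen is behind the controller

    hit = (origin[0] + t * direction[0],
           origin[1] + t * direction[1],
           origin[2] + t * direction[2])
    rel = sub(hit, top_left)

    # Fractions of the way across (u) and down (v) the screen.
    u = dot(rel, right_edge) / dot(right_edge, right_edge)
    v = dot(rel, down_edge) / dot(down_edge, down_edge)
    if not (0.0 <= u <= 1.0 and 0.0 <= v <= 1.0):
        return None  # ray hits the plane but misses the screen

    # Clamp so u == 1 or v == 1 maps to the last pixel, not one past it.
    x = min(int(u * width_px), width_px - 1)
    y = min(int(v * height_px), height_px - 1)
    return x, y
```

Any error in the estimated corner or edge vectors shifts the computed pixel directly, which is why locating the screen accurately matters most.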
